Arcee AI spent half its venture capital to build an open reasoning model that rivals Claude Opus in agent tasks

US start-up Arcee AI spent roughly half its total venture capital to train Trinity-Large-Thinking, an open reasoning model with 400 billion parameters designed to take on Claude Opus in agent tasks.

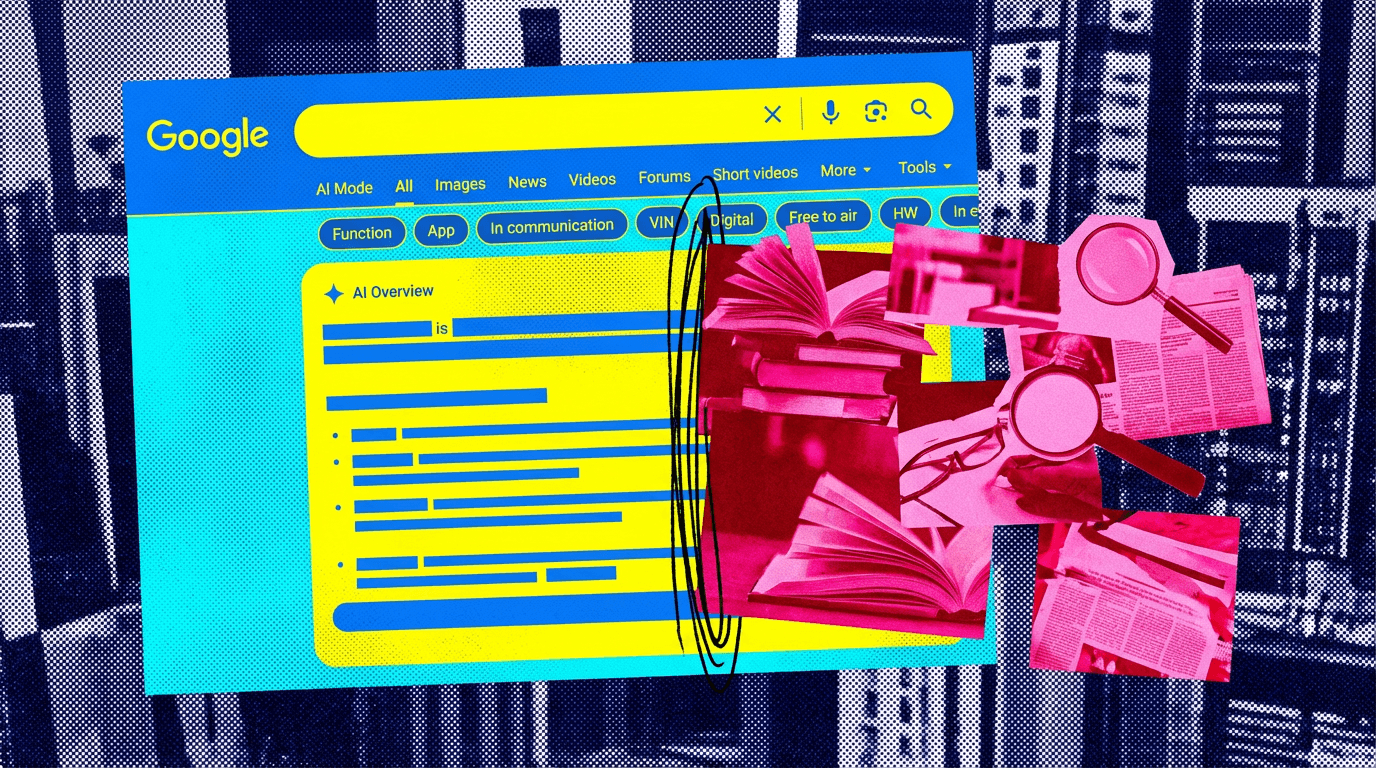

DeepMind CEO Hassabis says AGI will hit like ten industrial revolutions compressed into a single decade

DeepMind CEO Demis Hassabis compares the arrival of AGI to ten times the industrial revolution in a tenth of the time. "I sometimes quantify AGI as 10 times the industrial revolution at 10 times the speed. So unfolding over a decade instead of a century," Hassabis says on the 20VC podcast. He sees a "very good chance of it being within the next 5 years," an assessment he says hasn't changed since 2010, when co-founder Shane Legg predicted it would take 20 years: "I think we're pretty much on track."

Getting there still requires several major advances, including continuous learning, long-term planning, better memory architectures, and greater consistency. Hassabis describes current systems as "jagged intelligences," "really amazing at certain things when you pose the question in a certain way, but if you pose a question in a slightly different way they can still fail at quite elementary things." Scaling continues to deliver results, "although they're a bit less than they were at the start of all of this scaling."

Hassabis also points to a growing perception gap. "Today and in the next year things are a bit overhyped in AI," he says. But looking further out, "it's still very underappreciated how revolutionary this is going to be in the time scale of about 10 years."

Alibaba's Qwen team built HopChain to fix how AI vision models fall apart during multi-step reasoning

When AI models reason about images, small perceptual errors compound across multiple steps and produce wrong answers. Alibaba’s HopChain framework tackles this by generating multi-stage image questions that break complex problems into linked individual steps, forcing models to verify each visual detail before drawing conclusions. The approach improves results on 20 of 24 benchmarks.