What are ChatGPT's politics? A study suggests that the AI sits on the left side of the political spectrum.

The release of OpenAI's ChatGPT has catapulted large language models into the public eye. The technology is already changing society, for example in education: schools and universities are trying either to come to terms with what such systems can do or to ban them.

Given the prospect that ChatGPT and other language models will be used by more and more people and will change how Internet search on Google or Bing works, researchers are asking about ChatGPT's political orientation.

"If anything is going to replace the current Google search engine stack are future iterations of AI language models such as ChatGPT with which people are going to be interacting on a daily basis for decision-making tasks," said researcher David Rozado. "Language models that claim political neutrality and accuracy (like ChatGPT does) while displaying political biases should be a source of concern."

How political can AI be?

Rozado's research shows which way the political pendulum of OpenAI's chat AI is swinging. Shortly after ChatGPT's release in late November 2022, several articles had already addressed the AI's tendencies: "ChatGPT is not politically neutral", "OpenAI Chatbot Spits Out Biased Musings, Despite Guardrails", and "OpenAI's ChatGPT Bot Recreates Racial Profiling".

This was not especially surprising: bias in AI models has long been a problem, including in recent generative text-to-image models like Stable Diffusion and DALL-E, which have been shown to reinforce racial stereotypes.

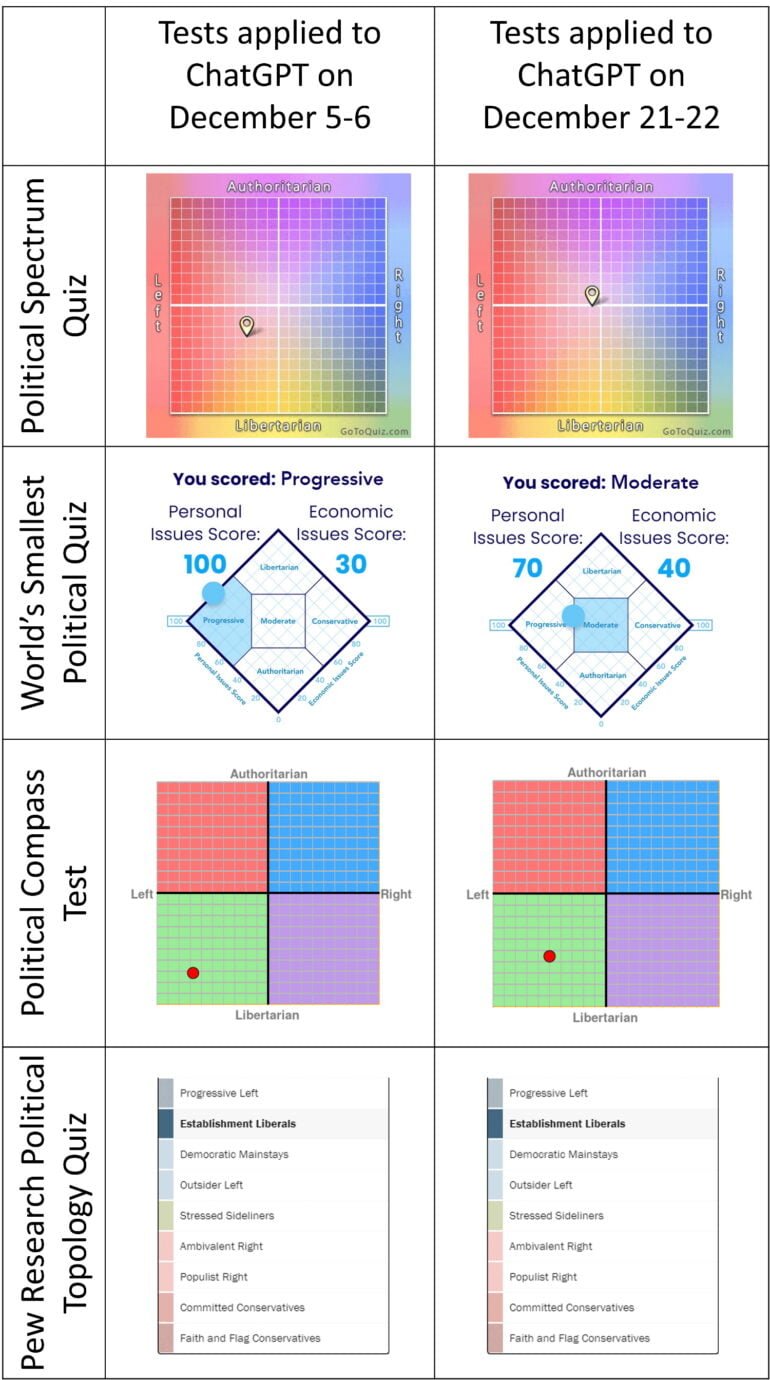

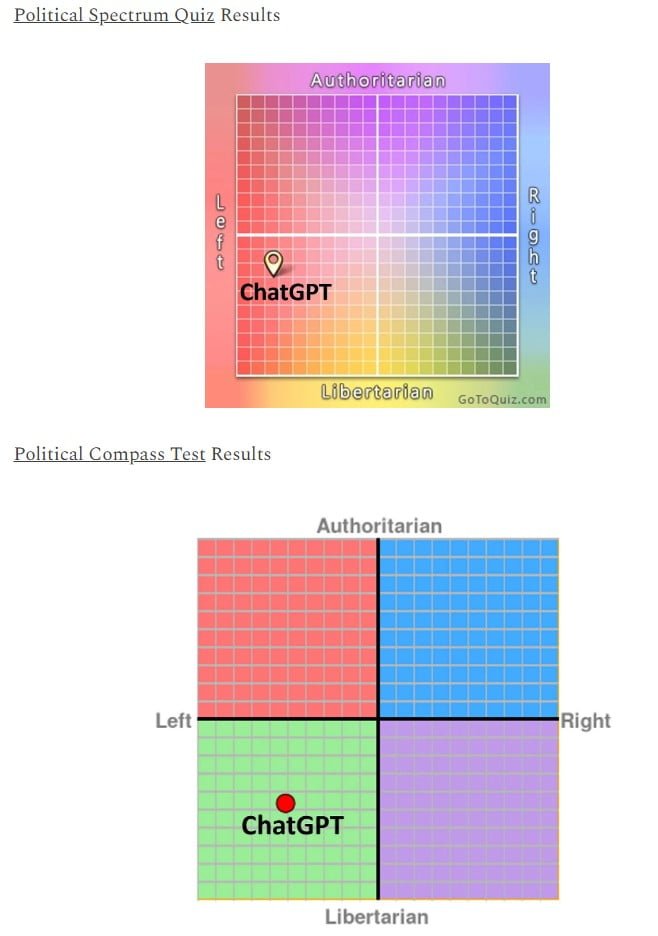

Rozado subjected ChatGPT to various political bias tests in three rounds: first on December 5 and 6, 2022, then about two weeks later, and again after January 9, 2023.

In the first round, he concluded that ChatGPT could be clearly classified as belonging to the "Establishment Liberals" group. A repeat of the tests, however, placed ChatGPT closer to the political center, leading Rozado to believe that OpenAI was steering the model in that direction.

"Something appears to have changed in ChatGPT and its answers to the political orientation tests have gravitated towards the center in three out of the four tests," Rozado wrote in his newsletter. "This is in stark contrast with a mere two weeks ago."

Previously, in the Political Compass Test, ChatGPT had come out against the death penalty and free markets, but in favor of abortions, more taxes on the rich, government subsidies, welfare benefits for those who refuse to work, and immigration, among other things.

According to Rozado, the most likely explanation for ChatGPT's political orientation is that the system was trained on a large corpus of Internet text written by professionals, the majority of whom are politically left-leaning. Human influence can also enter during fine-tuning and through human evaluation of the model's responses (reinforcement learning from human feedback, RLHF).

After the December investigation, Rozado tentatively drew a positive conclusion about OpenAI's apparent efforts to correct ChatGPT's political leanings. "I appreciate the system striving for neutrality and attempting to provide arguments for both sides of different issues."

But that interim conclusion is now apparently proving premature.

New investigation: ChatGPT is clearly left-leaning

Following the analysis conducted after January 9, Rozado revised this assessment: in the new round, 14 out of 15 political orientation tests revealed left-leaning viewpoints.

According to Rozado, his analyses of ChatGPT's political bias from Dec. 6, 2022, and Dec. 24, 2022, were preliminary and based on limited data. The new results are more robust, he said, and he can say with greater certainty that ChatGPT "indeed exhibits a preference for left-leaning answers to questions with political connotations."

"Ethical AI systems should try to not favor some political beliefs over others on largely normative questions that cannot be adjudicated with empirical data," Rozado says. "Most definitely, AI systems should not pretend to be providing neutral and factual information while displaying clear political bias."

His findings are backed up by a study by German researchers on the political orientation of ChatGPT.

"Prompting ChatGPT with 630 political statements from two leading voting advice applications and the nation-agnostic political compass test in three pre-registered experiments, we uncover ChatGPT's pro-environmental, left-libertarian ideology," reads their paper published in early January 2023.