Google's new Dream Fields AI can generate 3D models using only a text description.

AI-generated images are booming, thanks in particular to OpenAI's multimodal trained image analysis model CLIP. It has been trained with images and image descriptions and can therefore judge whether a text input is an appropriate description of the content of the image.

OpenAI uses CLIP to filter the generated images from DALL-E, which is also multimodal, and achieves impressive results. AI researchers have developed several AI systems that combine CLIP with generative models such as VQGAN, BigGAN, or StyleGAN to generate images based on text descriptions.

Google Dream Fields brings generative image AI into the third dimension

Now Google researchers are introducing "Dream Fields," an AI system that combines CLIP with NeRF. Using the "Neural Radiance Fields (NeRF)" method, a neural network can store 3D models.

Photos of an object from different angles are needed for AI training. After training, the network can output 3D views that reflect material properties and exposure of the original object.

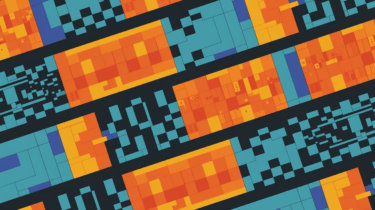

Dream Fields leverages NeRF's ability to generate 3D views and combines it with CLIP's ability to evaluate the content of images. After a text input, an untrained NeRF model generates a random view from a single viewpoint, which is evaluated by CLIP. The feedback is used as a correction signal for the NeRF model. This process is repeated up to 20000 times from different angles until a 3D model is created that matches the text description.

Google's Dream Fields is DALL-E in 3D

The researchers further improve the results with some constraints on camera position and background. As a result, Dream Fields does not generate backgrounds and instead focuses on central objects in the middle, such as boats, vases, buses, food or furniture.

"a robotic dog. a robot in the shape of a dog" | Video: Google

"bouquet of flowers sitting in a clear glass vase" | Video: Google

"a boat on the water tied down to a stake" | Video: Google

Similar to DALL-E, Dream Fields can mix object categories that are difficult to match in reality. DALL-E produced images of chairs made from avocados or penguins made from garlic. Dream Fields generates 3D views of avocado chairs or teapots made from Pikachu.

"an archair in the shape of a ____. an archair imitating a ____." | Video: Google

"a teapot in the shape of a ____. a teapot imitating a ____." | Video: Google

Google hopes that these methods will enable faster content creation for artists and multimedia applications. The researchers also tested a variant using a CLIP alternative, which allowed them to generate higher-resolution objects.

More examples and information are available on the Dream Fields project page. The code has not been published yet.