Roblox GPT-3 demo shows how AI can change game interactions

Google's SayCan controls robots through a large language model. IBM developer James Weaver is now applying this approach to non-player characters in the sandbox game Roblox.

Large language models such as OpenAI's GPT-3 or Google's PaLM can translate and complete texts, conduct dialogs, or generate code. Although many of the models' emergent abilities remain unexplored, AI researchers are already combining them with external modules such as Python interpreters and physics simulators, and using them in CICERO for the strategy game Diplomacy or for inner monologue in robots.

Google's AI division combined current robotics technology with PaLM in the summer of 2022. In PaLM-SayCan, a language model converts text input from users into robot instructions, enabling follow-up questions and promoting more flexible robot behavior.

Google's SayCan uses a language model for more robot flexibility

SayCan from Google uses chain-of-thought prompting to generate potential steps for carrying out an instruction and then evaluates each step's probability of success. The robot executes the chain of actions to which the language model assigns the highest probability of success.

The advantage here is that the robot accepts instructions in natural language. If someone asks for an energy-rich snack, the robot will preferentially hand that person an energy bar. If none is available, the robot will recognize an apple or a sugary drink as a suitable alternative.
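The snack example can be illustrated with a minimal sketch of the selection step. Everything here is an assumption made for illustration: the `Candidate` class, the `pick_next_skill` function, the split into a usefulness score and a feasibility score, and all numeric values are hypothetical and are not taken from Google's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    skill: str          # text form of a robot skill, e.g. "pick(apple)"
    usefulness: float   # hypothetical score: how well the skill fits the request
    feasibility: float  # hypothetical score: how likely the skill is to succeed now


def pick_next_skill(candidates):
    """Return the candidate with the highest combined score."""
    if not candidates:
        raise ValueError("no candidate skills to choose from")
    # Multiplying the two scores means an unavailable object (feasibility 0)
    # can never win, however well it matches the request.
    return max(candidates, key=lambda c: c.usefulness * c.feasibility)


# Request: "bring me an energy-rich snack" (all scores are made up)
candidates = [
    Candidate("pick(energy bar)", usefulness=0.6, feasibility=0.0),  # not available
    Candidate("pick(apple)", usefulness=0.3, feasibility=0.9),
    Candidate("pick(sponge)", usefulness=0.05, feasibility=0.9),
]

best = pick_next_skill(candidates)
print(best.skill)
```

With these made-up numbers, the energy bar would be the preferred choice, but because it is unavailable the apple ends up with the highest combined score.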

Roblox demo is Google's SayCan for NPCs

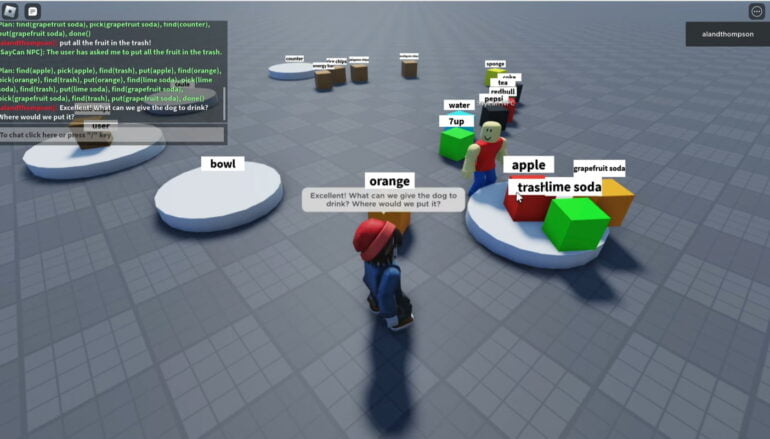

IBM developer James Weaver now shows in a Roblox demo how the concept behind SayCan can be used for more flexible NPCs. Weaver uses GPT-3 for his implementation, so anyone who wants to test the demo must have an OpenAI API key ready.

In the sandbox, players can then use a chat window to give instructions to an NPC, such as, "Put the rice chips in the bowl and then put the tea on the table."

All objects in the demo are simple boxes or round tables, named according to their role. The NPC follows the object names and executes the commands. For this purpose, GPT-3 converts the natural language commands into robot commands in the background, for example into

find(rice chips), pick(rice chips), find(bowl), put(rice chips), find(tea), pick(tea), find(table), put(tea), done().
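To show how such a command string could be turned into steps that an NPC script can act on, here is a minimal parsing sketch in Python. It is not Weaver's code: the `parse_plan` function, the `KNOWN_ACTIONS` set, and the rule that parsing stops at `done()` are assumptions based only on the example output above.

```python
import re

# Assumed action vocabulary, taken only from the example command string.
KNOWN_ACTIONS = {"find", "pick", "put", "done"}

# Matches name(argument); the argument may contain spaces but no parentheses.
STEP_PATTERN = re.compile(r"(\w+)\(([^()]*)\)")


def parse_plan(plan):
    """Split a command string into (action, object_name) tuples."""
    steps = []
    for match in STEP_PATTERN.finditer(plan):
        action, target = match.group(1), match.group(2).strip()
        if action not in KNOWN_ACTIONS:
            raise ValueError(f"unknown action: {action}")
        steps.append((action, target))
        if action == "done":
            break  # ignore anything the model emits after done()
    return steps


plan = (
    "find(rice chips), pick(rice chips), find(bowl), put(rice chips), "
    "find(tea), pick(tea), find(table), put(tea), done()"
)

steps = parse_plan(plan)
for action, target in steps:
    print(action, target)
```

Rejecting unknown actions is a deliberate choice in this sketch: language model output is not guaranteed to stay within the expected vocabulary, so it is safer to fail early than to pass an unexpected command to the NPC.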

The demo is available on the Roblox website. You can find additional information on Weaver's GitHub.
