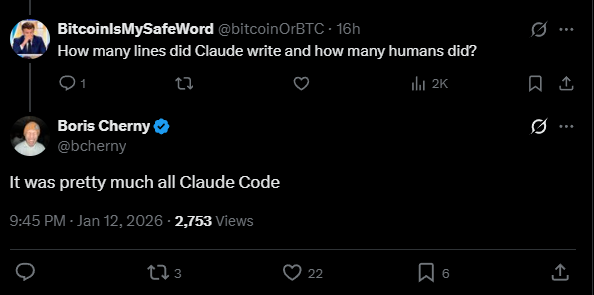

The inventor of Anthropic's Claude Code says his tool wrote almost all the code for Claude Cowork. Claude Cowork is a newly launched AI tool from Anthropic that builds on Claude Code but adds a user-friendly interface for non-programmers. According to Boris Cherny, who created Claude Code, "pretty much" all of Cowork's code was generated with it.

Product Manager Felix Rieseberg says the app came together in just a sprint and a half, roughly one and a half weeks. However, the team had already built some prototypes and explored ideas beforehand, and the current release is still a research preview with a few rough edges, Rieseberg says. Claude Code also provided an extensive foundation to build on, so Rieseberg's timeline likely refers mainly to the front-end work.