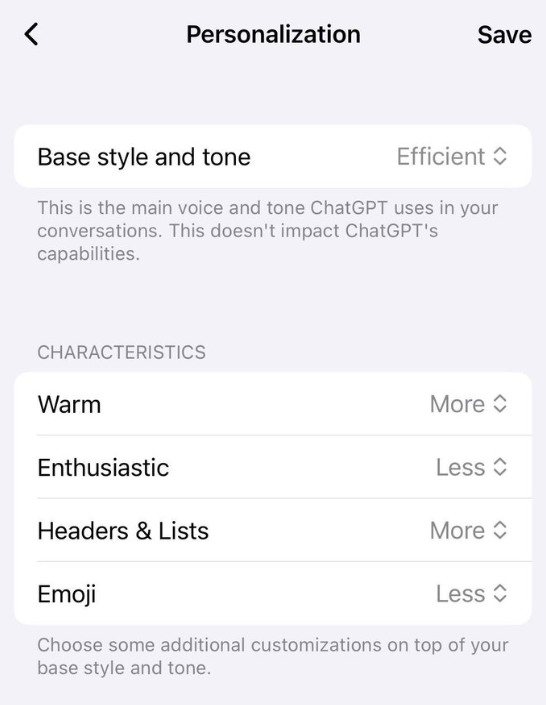

OpenAI's advertising plans for ChatGPT are taking shape. According to The Information, employees are discussing various ad formats for the chatbot. One option would have AI models preferentially weave sponsored content into their responses. For example, a question about mascara recommendations might surface a Sephora ad. Internal mockups also show ads appearing in a sidebar next to the response window.

Another approach would show ads only after users request further details. If someone asks about a trip to Barcelona and clicks on a suggestion like the Sagrada Familia, sponsored links to tour packages could appear. A spokesperson confirmed to The Information that the company is exploring how advertising might work in the product without compromising user trust.

OpenAI CEO Sam Altman has previously called AI responses shaped by advertising a dystopian future, especially if those recommendations draw on earlier, private conversations with the chatbot. Yet that appears to be precisely what OpenAI is now working on: advertising powered by ChatGPT's memory function, which could tap into personal conversation histories for targeted ads.